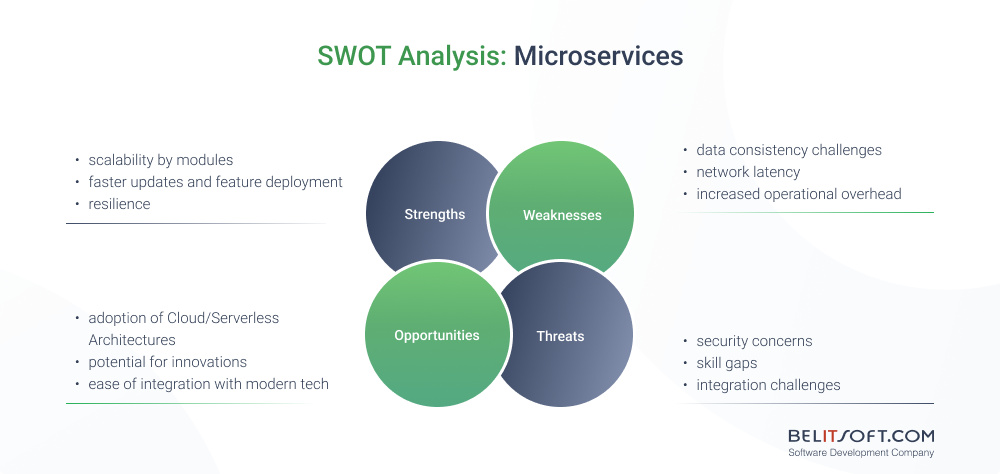

Microservices can offer powerful solutions for large-scale projects, but they're not the perfect fit for every project. Deciding between them and monolithic structures should be case-specific. In this post, I've distilled years of our team's expertise to help you determine what's truly best for your project.

When Businesses Choose Microservices Architecture

Let's first delve into why many businesses today are gravitating towards the microservices approach.

Enterprise Platforms

Imagine you're building a complex healthcare platform or an ERP with various components. Instead of sticking to one tech stack for everything, you select tech stacks according to their effectiveness for each part.

Take Belitsoft's case of developing a healthcare CRM with BI implementation. The solution was built on microservices, each using the technologies that best suit its operational demands. To ensure efficient performance without over-provisioning resources, our team chose serverless Node.js and Vue.js for the low-load analytical dashboard web platform. The BI module, on the other hand, encounters fluctuating loads and requires Python.

In essence, microservices architecture isn't about adhering to one technology; it's about using the strengths of multiple tech stacks. The key lies in being flexible and optimizing performance.

Projects requiring rapid deployment of features and updates

A standout advantage of microservices is how they speed up delivery. Given their modular nature, various teams can concurrently develop different components without interference. If you need to implement three different updates, for instance, you can roll each one out independently as soon as it is ready, without redeploying the whole system. Such a setup gives the development team room to try new things without the whole system coming crashing down.

In addition, in microservices, developers work within their own "box" or microservice when implementing new features. This code isolation prevents changes in one microservice from affecting the entire system. In a monolith, by contrast, the human factor plays a much bigger role.

Typically, a smaller team of developers, which may not include the original authors of the code and may even consist of newcomers, takes on adding new features and updates. They might find certain sections of the existing code cumbersome and make minor adjustments to simplify the implementation of new features, based on their perspective. However, the problem lies in their limited knowledge of which functionalities and blocks of code rely on the parts they're changing. This often leads to unintended issues that compromise the software's functionality.

Services requiring 100% availability

Think about healthcare, where uptime is critical since it directly impacts patient care. Even brief downtime results in lost trust and financial loss.

In such sectors, opting for a microservices setup is a strategic choice. The trick is that each service operates independently. So if one faces an issue, it doesn't pull the rest down with it. This approach not only keeps systems more available but also introduces a robust layer of fault tolerance.
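The isolation described above can be sketched in a few lines. This is only an illustration under stated assumptions, not a production design: the service names (`appointments`, `billing`, `analytics`) and the `build_dashboard` helper are hypothetical, and the "services" are plain in-process functions standing in for network calls.

```python
from typing import Callable, Dict


def appointments() -> str:
    return "3 appointments today"


def billing() -> str:
    return "2 unpaid invoices"


def analytics() -> str:
    # Simulate an outage in one service (hypothetical failure).
    raise ConnectionError("analytics service is down")


def build_dashboard(services: Dict[str, Callable[[], str]]) -> Dict[str, str]:
    """Call each service independently; a failure degrades only its own section."""
    page = {}
    for name, call in services.items():
        try:
            page[name] = call()
        except Exception as exc:  # real code would catch narrower error types
            page[name] = f"unavailable ({exc})"
    return page


dashboard = build_dashboard({
    "appointments": appointments,
    "billing": billing,
    "analytics": analytics,
})
```

Here the `analytics` section is marked unavailable while the other two sections still render. In a real deployment the same effect usually also needs timeouts and retries, which this sketch leaves out.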

Software with fluctuating workloads

If you're developing an app with varying traffic expectations across its components, I'd recommend the microservices architecture. An effective way to manage resources is to scale each service separately based on its load, which can help reduce costs while enhancing performance.

Typically, startups and SaaS platforms save a lot by paying only for actual resource usage. To name a few examples, fintech apps use microservices architecture to easily scale modules with large daily loads, such as transactions or balance tracking, while not spending resources on modules with low loads (loans, promotions, etc.). E-learning platforms may create a separate “box” for BI analytics, where the load fluctuates and differs a lot from that of the core learning system.
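To show what scaling each service separately looks like in numbers, here is a minimal sketch based on the fintech example. All figures are assumptions made up for illustration: the requests-per-second loads, the capacity of a single replica, and the `replicas_needed` helper are hypothetical.

```python
import math

# Hypothetical load per service, in requests per second.
load_rps = {"transactions": 900, "balance": 400, "loans": 20, "promotions": 5}

# Hypothetical capacity of one replica, in requests per second.
capacity_rps_per_replica = 100


def replicas_needed(load: float, capacity: float, minimum: int = 1) -> int:
    """Smallest replica count that covers the load, never below `minimum`."""
    return max(minimum, math.ceil(load / capacity))


# Each service gets only as many replicas as its own load requires.
plan = {
    name: replicas_needed(rps, capacity_rps_per_replica)
    for name, rps in load_rps.items()
}
total_replicas = sum(plan.values())
```

With these made-up numbers, the busy `transactions` service gets nine replicas while `loans` and `promotions` stay at one each, for fifteen replicas in total.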

Real-Life Case: When Scalability and Modularity of Microservices Shine

Microservices seem to be the secret sauce for businesses aiming for agility and seamless scalability, more so in cloud-native and serverless setups.

Consider a US healthcare startup. They wanted to build a product that combined healthcare CRM functionality with robust BI visualization. So Belitsoft stepped in to help.

- Microservices & AWS Pairing. Belitsoft paired microservices with AWS, in particular AWS Lambda's serverless model, to combine AWS's rock-solid security with the modularity of microservices. This let us scale the BI module independently of the rest of the app while saving money on the CRM, which consumes few resources. The result is automatic scaling with sensible budget allocation.

- Data Privacy Boost. A central feature of the solution was the SaaS multi-tenant architecture, rooted in microservices. This design guaranteed that each tenant's data remained completely secure and isolated, enhancing data privacy.

- Ace User Management & Security. Microservices helped to refine user management and access control. Integration with AWS Cognito and the Amazon API Gateway streamlined user identity verification and request routing, fortifying the application's security.

- Efficient Data Handling. We set up a dynamic ETL pipeline using AWS Glue, AWS SQS, and Apache Spark. After processing all that data, AWS QuickSight transformed it into intuitive visuals, utilizing pre-established dashboard frameworks.

By using the full potential of both microservices and AWS, Belitsoft gave this startup just what they needed: agility, security, and the ability to scale. It's a great example of how microservices can really make a difference, especially in sectors like healthcare.
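The tenant isolation mentioned in this case can be illustrated with a small in-memory sketch. It is not the project's actual implementation: `TenantStore` and its methods are hypothetical names, and a real system would enforce the same rule at the database and identity layers, for example by deriving the tenant ID from a verified token rather than trusting the caller.

```python
from collections import defaultdict
from typing import Dict, List


class TenantStore:
    """Keeps each tenant's records in a separate bucket keyed by tenant ID."""

    def __init__(self) -> None:
        self._records: Dict[str, List[dict]] = defaultdict(list)

    def add(self, tenant_id: str, record: dict) -> None:
        self._records[tenant_id].append(record)

    def list_for(self, tenant_id: str) -> List[dict]:
        # Every read is scoped to one tenant; there is no cross-tenant query.
        return list(self._records.get(tenant_id, []))


store = TenantStore()
store.add("clinic-a", {"patient": "P-001"})
store.add("clinic-b", {"patient": "P-900"})

clinic_a_view = store.list_for("clinic-a")
unknown_view = store.list_for("clinic-c")
```

The point of the design is that the tenant ID is a required argument on every access path, so a query without a tenant scope simply cannot be written.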

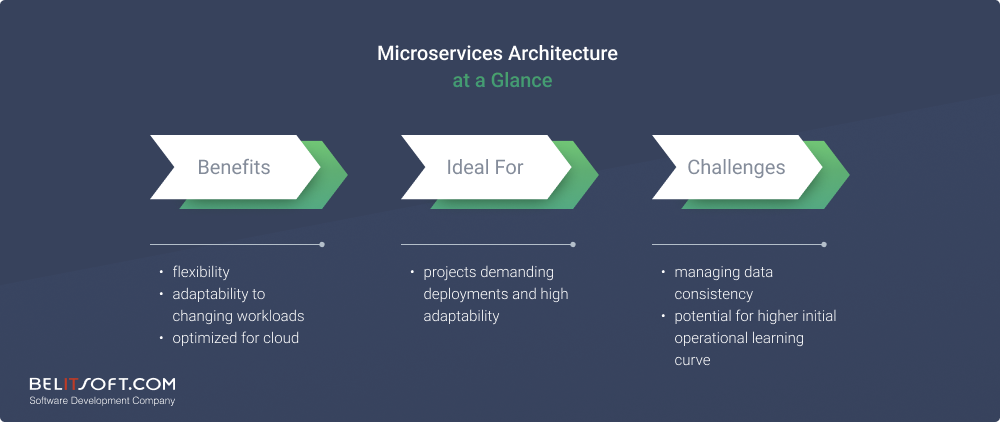

Challenges of Using Microservices Architecture

- Data Consistency. When each service has its own database, things can get complex. It's not just about the decentralized setup. You've got to deal with challenges like syncing up distributed transactions, getting used to the idea that consistency might take a moment ("eventual consistency"), and then there's the potential for sync conflicts.

- Network Latency. With microservices being spread out, there's naturally some lag when services chat with each other. This delay is heightened when data formats change or when calls are chained. Load balancing and service discovery further contribute to this delay.

- Service Orchestration. We've got to make sure all the services stay in sync, manage how data moves between them, keep an eye on their mutual dependencies, and ensure they perform well together, especially when multiple operations are happening at once.

- Increased Operational Overhead. Individual services come with distinct resource requirements, complicating their setup and management. We have to deal with scaling challenges, preventing service conflicts, and ensuring they don't hog resources.

- Security Concerns. As the number of distinct services communicating over a network increases, so does the likelihood of unauthorized access. Encryption becomes essential for secure data exchange between these services. Moreover, using diverse technology stacks for different services and reliance on third-party services or APIs can further expose the system to additional risks.

- Overhead Costs. Although breaking up an app into separate services is great for flexibility and scaling up, it comes with cost challenges. Think about the dedicated infrastructure, monitoring, and deployment tools required. And with all services communicating with each other, especially in the cloud, increased network traffic and the need to keep everything available at all times can add up. As the system's complexity rises, more advanced (and often costlier) management tools become necessary. Plus, safeguarding every service is an added expense.
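The network latency point from the list above is easy to see with some arithmetic. The sketch below uses invented numbers: the service names and per-call latencies are hypothetical, and the fan-out variant is shown only as one common way to shorten a chain, not as something the projects described here necessarily used.

```python
from typing import Dict, List

# Hypothetical per-call latencies in milliseconds (network plus processing).
latency_ms: Dict[str, int] = {
    "gateway": 5,
    "orders": 20,
    "inventory": 15,
    "pricing": 25,
}


def chained_latency(path: List[str]) -> int:
    """Sequential calls: each hop waits for the next, so latencies add up."""
    return sum(latency_ms[service] for service in path)


def fan_out_latency(entry: str, parallel: List[str]) -> int:
    """Entry service calls the others concurrently and waits for the slowest."""
    return latency_ms[entry] + max(latency_ms[service] for service in parallel)


chained = chained_latency(["gateway", "orders", "inventory", "pricing"])  # 65
fanned = fan_out_latency("gateway", ["orders", "inventory", "pricing"])   # 30
```

Under these assumptions, the chained request takes 65 ms while the fan-out version takes 30 ms, which is why long call chains are usually the first thing to look at when a microservices system feels slow.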

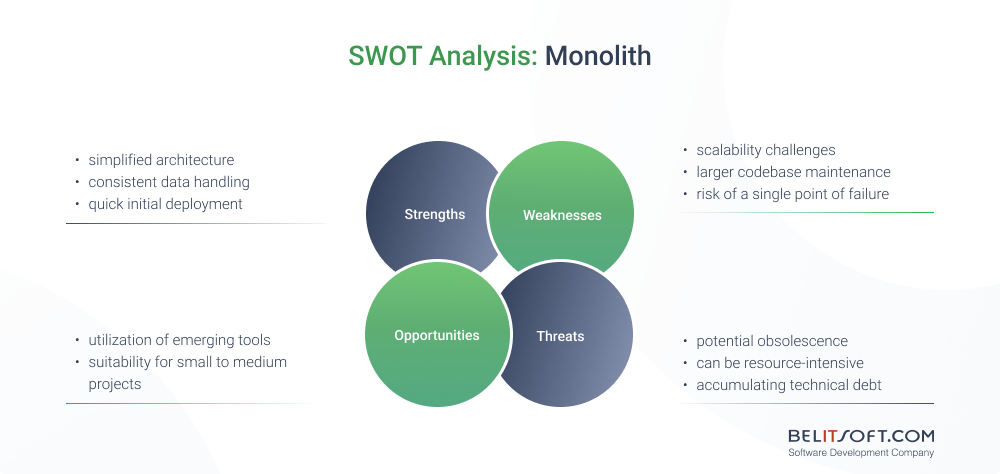

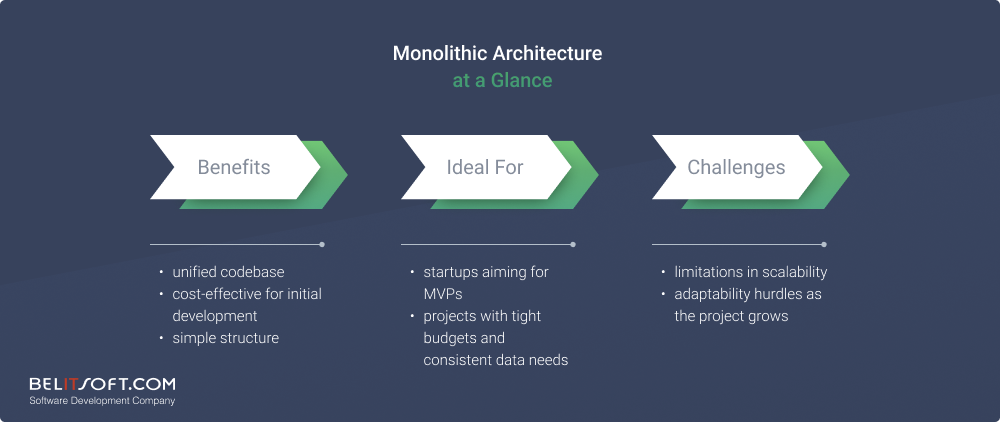

When Businesses Choose Monolithic Architecture

Businesses operating with a limited initial budget

If you're watching your budget closely and looking for a straightforward development and deployment process, I think going monolithic might be a smart move. It provides a single unified codebase, making the development and testing processes smoother. There's no hassle of inter-service coordination, and developers can navigate and debug the whole application within a single IDE project.

For anyone looking to save on deployment costs, it's a dream solution. There's only one server to manage, translating to reduced networking complications and more efficient utilization of memory and CPU. Modern tools like Docker further simplify the process, making a monolithic structure an attractive option from a cost perspective.

Startups launching a quick MVP

Monolithic architectures are akin to an all-in-one solution. The single codebase results in a unified space for development. This alone can save a ton of time, as there's no need to juggle communication between different services. So, for startups eager to roll out an MVP swiftly, this is a game-changer.

When it comes to testing and deployment, the unified nature simplifies things. There is no need to fumble around simulating various external APIs. And if there's a surge in user traffic initially, monoliths can directly scale up to handle that.

Software managing critical data

For software managing sensitive data, such as financial or healthcare records, a monolithic system provides the consistency and security that are paramount. With a monolith, all components are in one place, which makes data consistency easier to ensure and reduces security threats thanks to limited entry and exit points. This makes audits and compliance checks more straightforward. While microservices offer flexibility and scalability, when the primary concern is safeguarding data, I prefer a monolithic system. It's particularly fitting for scenarios requiring strict data protection.

Real-Life Case: Reducing Costs by 90% with Monolithic Architecture

Prime Video's Audio-Video Monitoring Service showcased the strength of a unified monolithic codebase, complemented by specific tools and practices. Here's a snapshot of their strategy:

- Simplicity in Deployment & Scaling. They used Docker for smooth containerization, ensuring a consistent app environment from development to deployment. Add Jenkins to the mix for faster integration and deployment. Regular code check-ins and a modular approach made the entire codebase neat and easy to handle.

- Consistent & Unified Data Management. Prime Video anchored their data layer on PostgreSQL for rock-solid storage. And for quick data access, Memcached was their go-to tool. With routine database tune-ups, they ensured the data was always spot on.

- Cost Efficiency. They ran the service on AWS EC2, allocating resources dynamically and cutting costs by a whopping 90%! Usage monitoring and smart logging made sure no resources went to waste.

- Enhanced Reliability. Monitoring tools, such as Nagios and Grafana, kept an eagle eye on app performance, detecting potential issues in real time. Couple that with solid backup plans and rigorous tests, and you've got a system you can count on.

- Streamlined Development & Maintenance. They took advantage of IntelliJ IDEA and Git to make development smooth. SonarQube came into play for quality checks.

So, it's clear that Prime Video's service wasn't just about choosing monolithic; it was all about the right tools and best practices backing it up.

Challenges of Using Monolithic Architecture

- Restricted Scalability. In monolithic architecture, having everything in one big box can be a bit of a pain when we want to scale. Imagine one component of the app experiencing a surge in traffic. In a monolithic setup, you'd need to scale the entire system to accommodate this, which isn't particularly efficient and can escalate costs. Plus, even minor updates require redeploying the entire system, risking more downtime. As we keep adding new features, the entire structure gets more and more complicated to handle. While vertical scaling is an option, it's bound by inevitable hardware constraints.

- Limited Flexibility. Committing to a single tech stack can make it tough to keep up with the latest and greatest in the tech world. If our stack becomes outdated or goes niche, sourcing experts familiar with it can be daunting. Tailoring individual app components can pose additional challenges. Moreover, overreliance on a particular vendor can corner us with escalating costs and potential limitations. And if we ever think of switching things up, it's going to be a major headache, both in effort and cost.

- Large Codebase. As monolithic structures expand, things can get a bit tangled up in the codebase. Quick fixes pile up over time as technical debt. Then, making any change as well as testing becomes a minefield due to the myriad of interdependencies. Frequent updates often necessitate complete system redeployments, posing a risk for potential downtimes. Such constraints can put a drag on being innovative and quick on our feet.

- Single Point of Failure. In monolithic architecture, most things are intricately linked. So, if an issue arises in one part, it can potentially disrupt the entire system. Unlike models where elements operate independently, in a monolith, setbacks aren't isolated. A minor glitch can snowball into a significant system-wide disturbance, jeopardizing stability and potentially impacting business operations. How big that risk is depends on how tightly the components are coupled.

- Resource-intensive. Unlike microservices architecture, where only the active module scales, monoliths necessitate scaling the entire system. This can lead to resource inefficiency, especially when only certain portions of the app experience high traffic. It's like getting one more train when all that's needed is an additional coach.

In addition, having everything bundled together in a monolithic system can make it a real headache for newcomers joining the team. Technically, they've got to wrap their heads around this intertwined codebase and all the tools we've tailored for our monolith. But from the business side of things, it's also tricky. Longer onboarding means more training costs. Given the interconnectivity, mistakes can be costly and hinder product releases, a potential setback in a competitive market.

Can You Mix Both Or Migrate Between Microservices and Monolith?

Amazon's strategy has caught my attention. They're deftly combining the strengths of the classic monolithic architecture with cutting-edge tech tools, a blend of tradition with modernity. While traditional monoliths faced challenges with scalability and maintainability, the integration of tools like Docker, CI/CD pipelines, Prometheus, and Grafana is addressing these concerns.

Can you migrate between the two?

Absolutely. However, migrating from monolith to microservices may be complex. And here is why:

Visualize the monolithic architecture as an intricately woven fabric where every thread is interlinked, meaning a pull on one might disturb the entire cloth. Microservices, on the flip side, resemble individual patches: distinct and autonomous. Migrating means carefully cutting this fabric into separate patches without unraveling it, which is what makes the process hard, but also what provides the flexibility and scalability.

Yet shifting in the opposite direction, consolidating microservices into a monolith, is comparatively straightforward and can be beneficial when services have overlapping functionality.

And what about using them both?

The middle ground is the hybrid model, a fusion of monolith and microservices. It harnesses the strength of a unified structure while reaping the benefits of modular adaptability.
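One way to picture a hybrid setup is a thin routing layer in front of the monolith. The sketch below is an assumption-laden illustration, not a recommended implementation: the handlers, the `EXTRACTED` routing table, and the `/analytics` prefix are all hypothetical, and both "backends" are plain functions standing in for real deployments.

```python
from typing import Callable, Dict


def monolith_handler(path: str) -> str:
    # Stands in for the existing unified application.
    return f"monolith handled {path}"


def analytics_service(path: str) -> str:
    # Stands in for a separately deployed service reached over the network.
    return f"analytics service handled {path}"


# Hypothetical routing table: path prefixes already extracted into services.
EXTRACTED: Dict[str, Callable[[str], str]] = {"/analytics": analytics_service}


def route(path: str) -> str:
    """Send extracted prefixes to their service; everything else stays in the monolith."""
    for prefix, handler in EXTRACTED.items():
        if path.startswith(prefix):
            return handler(path)
    return monolith_handler(path)


report_response = route("/analytics/report")
user_response = route("/users/1")
```

Adding an entry to the routing table moves one more piece of functionality out of the monolith, so the same layout also supports a gradual migration in either direction.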

In conclusion, while both monolithic and microservices architectures have their unique strengths and challenges, the best choice often hinges on the project's specific requirements and long-term vision. There isn't a one-size-fits-all answer. Sometimes, blending, transitioning, or merging these architectural styles may also be advantageous.

Still uncertain about monolith vs. microservices? Reach out to our team! We'll help you navigate the best path tailored to your project.
