ChatGPT API integration

- Chat with your own data with the help of our API integration services.

- Our developers can integrate AI-powered experiences directly into your own enterprise applications for internal or external use.

- We can retrieve relevant information from extensive data at query time.

- AI-generated answers come from the latest information from your specific data sources for accuracy and relevance, avoiding outdated or incorrect responses.

- We fine-tune OpenAI models exclusively for your use.

- We guarantee data privacy and isolation, including network isolation and other enterprise-grade security controls.

- Your solution may resemble Web ChatGPT, but will be hosted locally with access to the cloud via REST API.

- We can deploy your model to Virtual Agents for conversational experiences across multiple platforms.

ChatGPT API Integration for Your Business

Time and Cost Savings. By leveraging pre-existing systems like ChatGPT, you bypass the tough challenge of sourcing experienced AI developers to construct a chatbot or conversational AI model from the ground up. This strategic move conserves significant development resources. For a proprietary bot with a unique feature set, consider our custom chatbot development services.

ChatGPT CRM Integration. Microsoft Dynamics CRM has default integration with ChatGPT. Salesforce CRM and HubSpot have developed their own ChatGPT-like generative AI. If your CRM is not on the list but you still want to integrate it with this generative AI, we are here to help. We expertly implement features like a summary of customer email history, previous meetings, and notes with highlighted talk-to-listen ratios, customer sentiments, and competitor mentions. Your sales team will be able to see priority recommendations for their pipelines and quickly generate email replies directly from the email interface.

ChatGPT Angular Integration. Users will be able to talk with ChatGPT within your Angular app through a dedicated chat dialog window. Angular will send their queries to your backend server (you may already have one, or we can build it on a platform such as Node.js or Python). For a Node.js backend, we write TypeScript code that sends requests to the ChatGPT API using the OpenAI npm package (or a similar library) and forwards the model's response back to your Angular app.

ChatGPT Integration with .NET. Your .NET Core application can support conversational interfaces that interact with OpenAI models capable of understanding and generating text or converting audio into text. REST APIs and libraries are the default way to set this up. Once configured, a digital assistant will answer questions about your business, summarize information from documents in your .NET applications, extract key insights from documents, autocomplete sentences, and more.

Pre-Requisites for ChatGPT API Integration

Step-by-Step Guide to ChatGPT API Integration

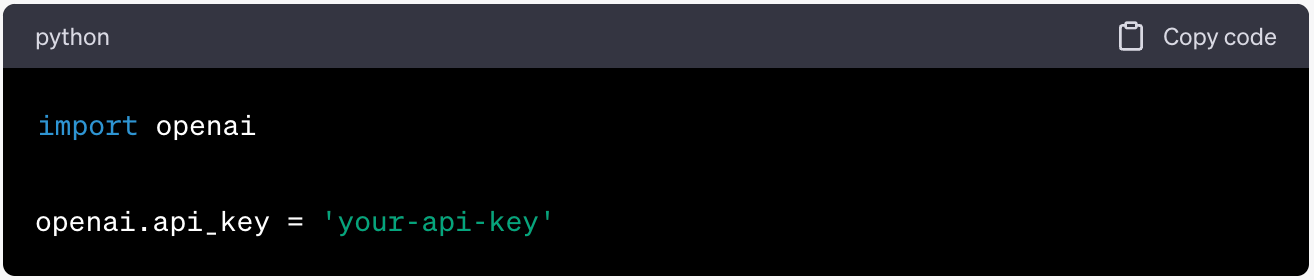

Step 1. Authenticate with OpenAI

Typically, you need to place an API key in the header of each HTTP request you make to OpenAI. Here's a Python example.

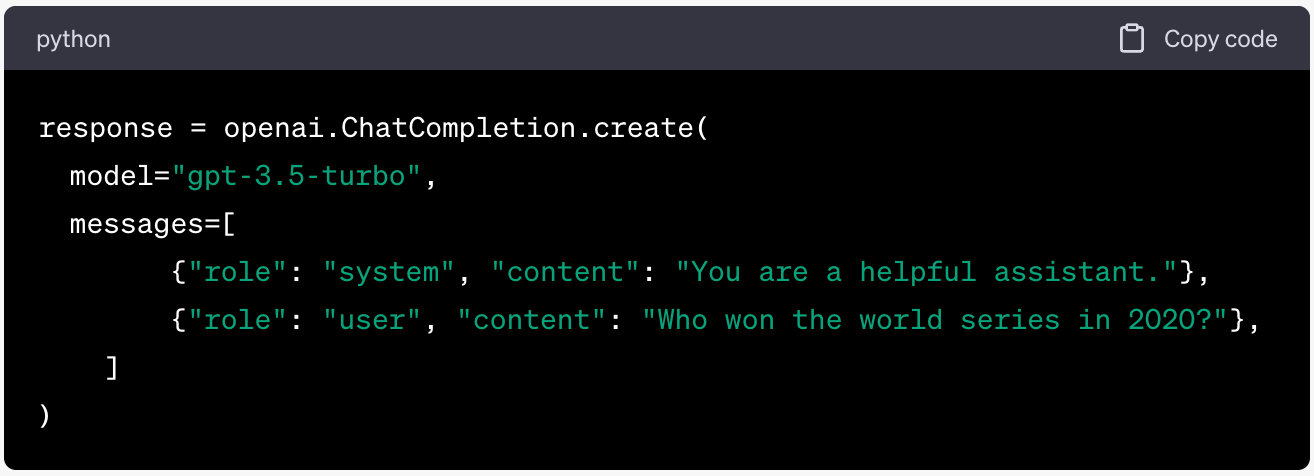

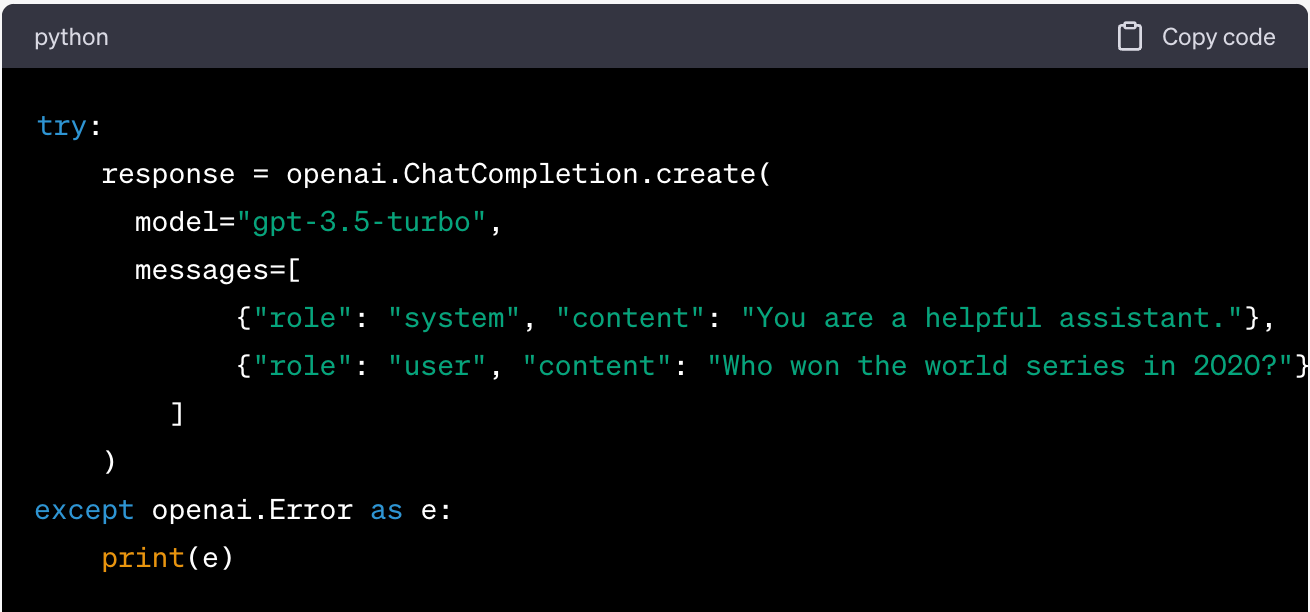
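As a minimal text sketch of the header described in this step, the snippet below builds the request headers from an API key. The helper name `build_auth_headers` and the fallback to an `OPENAI_API_KEY` environment variable are assumptions made for illustration, not part of the original example.

```python
import os

def build_auth_headers(api_key=None):
    """Return HTTP headers that carry the API key as a Bearer token."""
    # Use the explicit key if given; otherwise fall back to the
    # OPENAI_API_KEY environment variable (an assumed convention here).
    key = api_key or os.environ.get("OPENAI_API_KEY")
    if not key:
        raise ValueError("No API key provided and OPENAI_API_KEY is not set")
    return {
        "Authorization": f"Bearer {key}",
        "Content-Type": "application/json",
    }

# "sk-your-key" is a placeholder, not a working key.
headers = build_auth_headers("sk-your-key")
```

Reading the key from the environment keeps it out of source control, which is usually preferable to hard-coding it.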

Step 2. Make Your First API Request

For ChatGPT, a typical API request entails sending a series of messages and receiving a model-generated message in response.

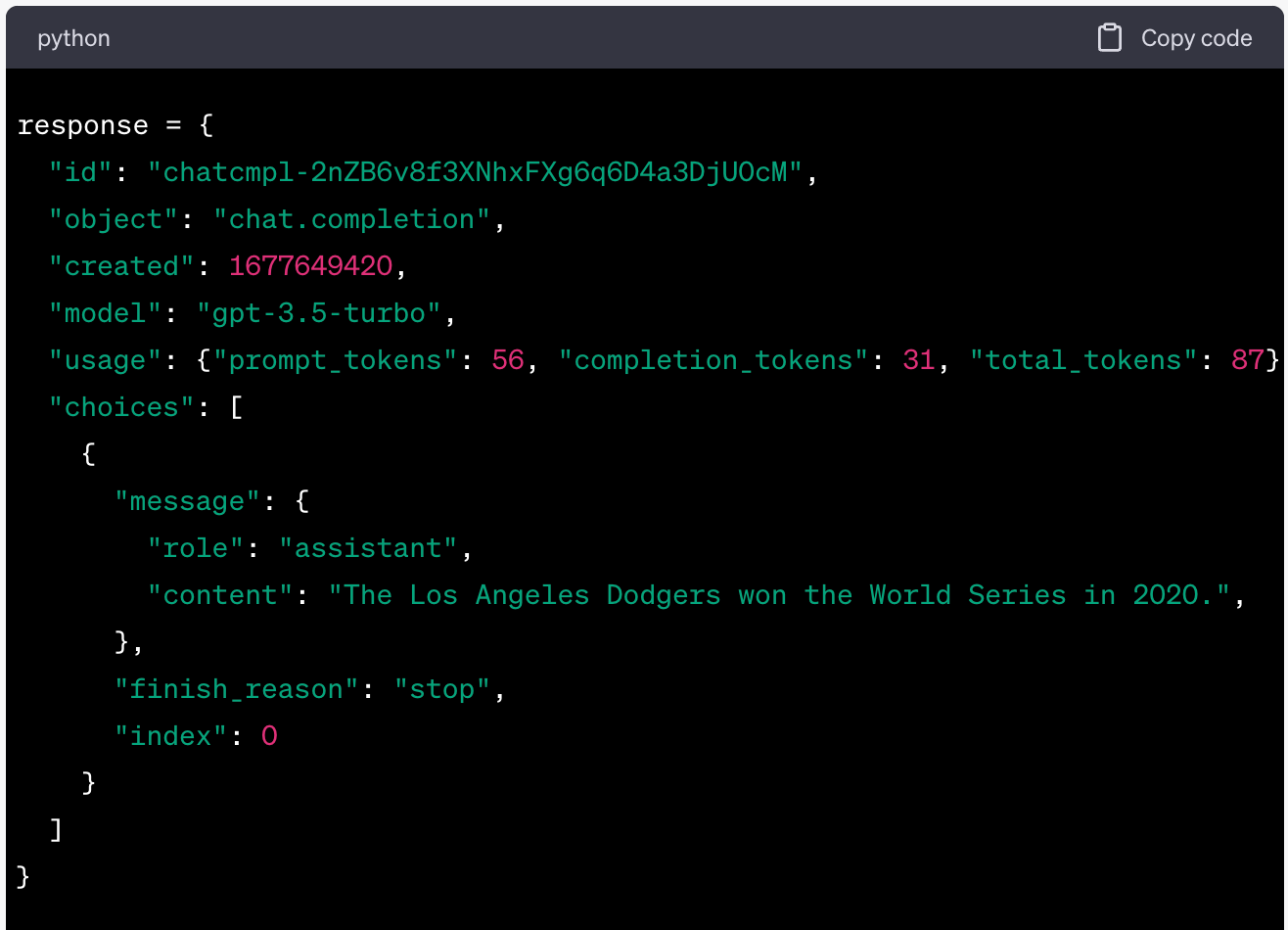
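The request described in this step might look like the sketch below, which uses only the Python standard library and prepares the request without sending it. The helper name `build_chat_request`, the model name `gpt-3.5-turbo`, and the sample messages are illustrative assumptions; adjust them to your account and use case.

```python
import json
import urllib.request

API_URL = "https://api.openai.com/v1/chat/completions"

def build_chat_request(api_key, messages, model="gpt-3.5-turbo"):
    """Prepare (but do not send) a chat completion request."""
    payload = {"model": model, "messages": messages}
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )

# A conversation is a list of messages, each with a role and content.
messages = [
    {"role": "system", "content": "You are a helpful assistant."},
    {"role": "user", "content": "What are your opening hours?"},
]
request = build_chat_request("sk-your-key", messages)

# Sending requires a valid key and network access:
# with urllib.request.urlopen(request) as resp:
#     reply = json.load(resp)
```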

Step 3. Understand the Response Structure

When you make an API call to ChatGPT, you'll receive a response object containing the requested information. This response object includes a 'choices' field, which contains an array of choice objects, each holding a model-generated message.

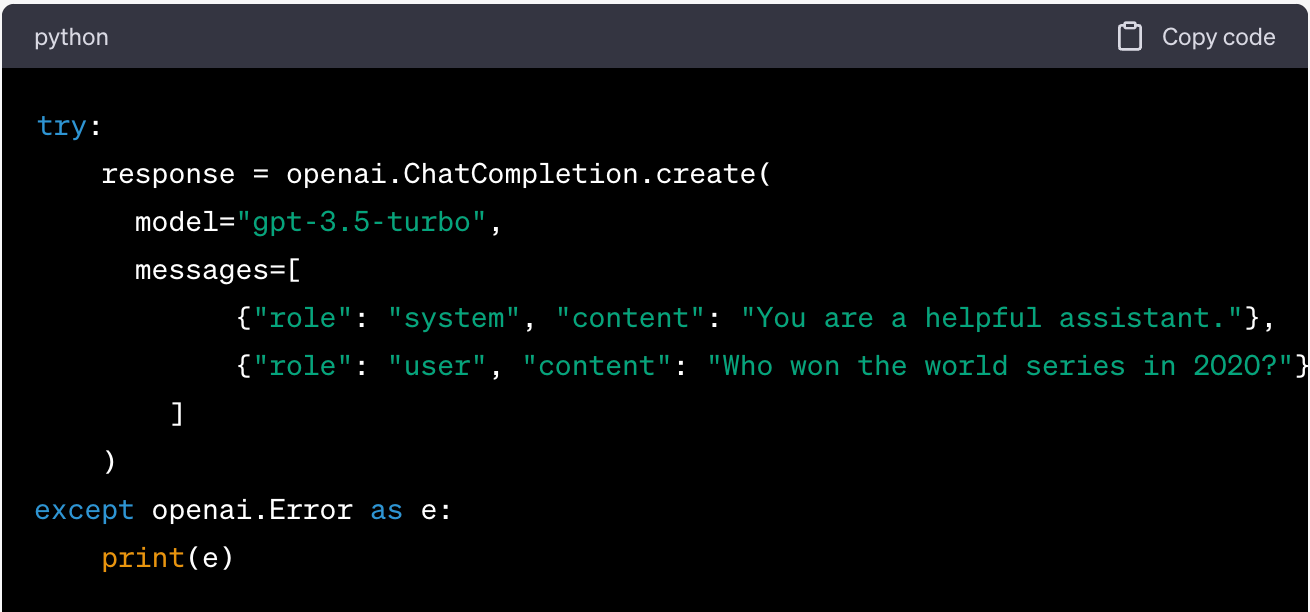
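To make the structure concrete, here is a small sketch that pulls the assistant's text out of a response. The `sample_response` dictionary is a hand-written illustration of the general shape, not output captured from the API, and `extract_reply` is a hypothetical helper name.

```python
def extract_reply(response):
    """Return the text of the first generated message in a chat response."""
    choices = response.get("choices") or []
    if not choices:
        raise ValueError("Response contains no choices")
    # Each choice wraps one generated message with a role and content.
    return choices[0]["message"]["content"]

# Hand-written sample showing the general shape of a response.
sample_response = {
    "id": "chatcmpl-example",
    "object": "chat.completion",
    "choices": [
        {
            "index": 0,
            "message": {"role": "assistant", "content": "Hello! How can I help?"},
            "finish_reason": "stop",
        }
    ],
    "usage": {"prompt_tokens": 9, "completion_tokens": 7, "total_tokens": 16},
}

print(extract_reply(sample_response))
```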

Step 4. Handle Errors and Debug

If an error arises, the API will return an HTTP error status code, alongside a message that provides more details about the issue.

Wrap the API call in error handling so that, if an error occurs, the program prints the error message and continues rather than crashing.

For debugging, consider printing the entire response object to inspect all its data, which can help verify if your request is correctly formatted and if the API is returning the expected data.
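One way to handle errors along these lines is sketched below with the standard library. The function `send_request` and the stand-in `fake_unauthorized` are hypothetical names; the stand-in simulates a rejected key so the example runs without network access.

```python
import io
import json
import urllib.error
import urllib.request

def send_request(request, opener=urllib.request.urlopen):
    """Send a prepared request and return (reply, error_message)."""
    try:
        with opener(request) as resp:
            return json.load(resp), None
    except urllib.error.HTTPError as err:
        # The API answered with an error status; the body carries the details.
        detail = err.read().decode("utf-8", errors="replace")
        return None, f"HTTP {err.code}: {detail}"
    except urllib.error.URLError as err:
        # The request never reached the API (for example, a DNS or connection failure).
        return None, f"Network error: {err.reason}"

def fake_unauthorized(request):
    """Hypothetical stand-in for urlopen that simulates a rejected API key."""
    body = io.BytesIO(b'{"error": {"message": "Invalid API key"}}')
    raise urllib.error.HTTPError("https://example.invalid", 401, "Unauthorized", {}, body)

reply, error = send_request(None, opener=fake_unauthorized)
if error:
    # The program reports the problem and carries on instead of crashing.
    print(f"Request failed: {error}")
```

Returning the error as a value rather than raising it is a design choice for this sketch; in a larger application you may prefer to log it or raise a custom exception.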

Step 5. Troubleshooting

If you encounter issues with the ChatGPT API, first check your API key. Make sure you've entered it correctly and confirm its validity.

For issues with a specific endpoint or function, refer to the API documentation, which provides in-depth insights into each endpoint, including accepted parameters and practical examples of API usage.

If you've verified your API key and consulted the documentation but still face problems, feel free to reach out to the expert support team at Belitsoft for further assistance.

Use Cases: 5 Famous Apps that Integrated ChatGPT

- Slack, a widely used business messaging platform, was one of the first software applications to integrate ChatGPT. Serving as an internal company collaboration and communication tool, it assists users in composing messages to their colleagues by providing text suggestions that can be customized to fit your needs. Moreover, it leverages AI to summarize entire channels or individual discussion threads, ensuring you stay up-to-date on crucial conversations.

- Shopify harnesses the power of ChatGPT through its companion app, Shop. This AI-enhanced smartphone application works as a customer service chatbot, providing personalized product advice to its users. Upon determining the initial topic, the chatbot prompts further questions to fine-tune the selection of product options. Through this efficient dialogue, the AI can recommend a diverse array of products from the expansive range available across numerous stores on the platform.

- HubSpot, known for its marketing and sales services, is in the process of integrating an AI chat using ChatGPT. This integration aims to empower HubSpot CRM users to extract information from the system and modify records using natural language input alone. An alpha version of this feature, named 'ChatSpot,' will be released in the near future.

- Quizlet, an online learning platform, has integrated the ChatGPT API to introduce a new 'personal learning coach' named 'Q-Chat.' Using Quizlet's extensive library and the Socratic method of questioning, Q-Chat engages with students, asking probing questions that promote a deeper understanding of concepts beyond basic knowledge testing. Currently in beta, Quizlet's Q-Chat is available to users aged 18 and older in the United States.

- Snapchat, the popular social media app, has integrated ChatGPT into its messenger service as a feature called 'My AI.' As explained by Snapchat CEO Evan Spiegel, My AI integrates seamlessly into ongoing conversations with friends, acting as an alternative to the browser window. However, it has certain limitations: it avoids discussions of controversial or explicit content and does not generate academic papers to assist with homework.

Frequently Asked Questions

Absolutely! The ChatGPT API allows for a seamless integration of ChatGPT's capabilities into your applications. It grants direct access to ChatGPT's impressive talent for generating human-like responses. This enables you to engage your users in natural, captivating dialogue.

To integrate ChatGPT into your application, you'll need to follow these steps:

- Obtain access: First, you'll need to request and secure access to the ChatGPT API from OpenAI. This usually involves creating an account, generating an API key, and subscribing to the appropriate pricing tier.

- Install required libraries: Next, you'll need to install any necessary libraries. For instance, if you're using Python, you'll need the 'openai' package, which can be installed via pip.

- Make an API call: You'll then use the ChatGPT API to send a message or series of messages to the model and receive a response.

- Handle response: Once you get a response from the model, you'll need to process it according to your application's needs. This could involve extracting the content of the assistant’s message and displaying it in your application.

- Error Handling: Implement a method to gracefully handle any issues that arise when making requests to the ChatGPT API.

- Understand Rate Limiting: Finally, be aware of and handle rate limits. OpenAI may restrict the number of requests you can make to the API within a certain timeframe.

OpenAI has defined rate limits based on different user types to ensure efficient API usage. The following limits are set according to the user category:

- Free trial users: 20 requests per minute (RPM) and 40,000 tokens per minute (TPM)

- Pay-as-you-go users (within the first 48 hours): 60 RPM and 60,000 TPM

- Pay-as-you-go users (after the initial 48 hours): 3,500 RPM and 90,000 TPM

Understanding these rate limits is essential for effective usage of the ChatGPT API.
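A common way to stay within rate limits is to retry with exponential backoff when the API signals that you have sent too many requests (HTTP status 429). The sketch below assumes a hypothetical `RateLimitError` exception that your own request code would raise in that case; the retry counts and delays are illustrative defaults.

```python
import random
import time

class RateLimitError(Exception):
    """Hypothetical exception standing in for an HTTP 429 response."""

def call_with_backoff(func, max_retries=5, base_delay=1.0, sleep=time.sleep):
    """Call func, retrying with exponential backoff when it is rate limited."""
    for attempt in range(max_retries):
        try:
            return func()
        except RateLimitError:
            if attempt == max_retries - 1:
                raise  # Give up after the final attempt.
            # Double the wait on each attempt and add random jitter so that
            # many clients do not retry at exactly the same moment.
            delay = base_delay * (2 ** attempt) + random.uniform(0, base_delay)
            sleep(delay)
```

For example, `call_with_backoff(lambda: send(request))` would wrap a hypothetical `send` function that raises `RateLimitError` on a 429 response.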

During a conversation with ChatGPT, every message counts against the model's token limit. As the conversation lengthens, fewer tokens remain for new messages and for the model's reply. Therefore, you need to manage conversation length and complexity so that the whole exchange stays within the token limit.

This practice not only maintains optimal performance but also maximizes the model's understanding and responsiveness.
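One simple way to manage conversation length is to drop the oldest turns once a budget is exceeded. The sketch below is an illustration under stated assumptions: `estimate_tokens` uses a crude rule of thumb of about four characters per token, which is only an approximation, and both function names are hypothetical. For accurate counts, use a real tokenizer.

```python
def estimate_tokens(text):
    """Very rough token estimate (assumption: about 4 characters per token)."""
    return max(1, len(text) // 4)

def trim_history(messages, max_tokens):
    """Keep system messages plus the most recent turns that fit in max_tokens."""
    system = [m for m in messages if m["role"] == "system"]
    others = [m for m in messages if m["role"] != "system"]
    budget = max_tokens - sum(estimate_tokens(m["content"]) for m in system)
    kept = []
    # Walk backwards from the newest message, keeping turns while they fit.
    for message in reversed(others):
        cost = estimate_tokens(message["content"])
        if cost > budget:
            break
        kept.append(message)
        budget -= cost
    return system + list(reversed(kept))
```

Keeping the system message while discarding the oldest user and assistant turns preserves the assistant's instructions; summarizing older turns instead of dropping them is another option.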

The ChatGPT API isn't free; it operates on a pay-as-you-go basis, so you're charged only for your actual API usage. And with an affordable price per 1,000 tokens, you're getting fantastic value for your money.
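Per-token pricing makes costs easy to estimate. The sketch below shows the arithmetic only: the price used is a made-up placeholder, not a real OpenAI rate, and the single flat rate is a simplification, since prompt and completion tokens may be priced differently.

```python
def estimate_cost(prompt_tokens, completion_tokens, price_per_1k):
    """Estimate the cost of one call at a flat price per 1,000 tokens."""
    total_tokens = prompt_tokens + completion_tokens
    return total_tokens / 1000 * price_per_1k

# Hypothetical rate for illustration only; check OpenAI's pricing page for real figures.
EXAMPLE_PRICE_PER_1K = 0.002

print(f"${estimate_cost(1500, 1000, EXAMPLE_PRICE_PER_1K):.4f}")
```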

It's important to remember that your ChatGPT Plus subscription does not include access to the ChatGPT API. These services are billed separately, each with its own unique pricing structure.


Our Clients' Feedback


We have been working for over 10 years and they have become our long-term technology partner. Any software development, programming, or design needs we have had, Belitsoft company has always been able to handle this for us.

Founder from ZensAI (Microsoft)/ formerly Elearningforce